What is Kubernetes?

Kubernetes这个单词来自于希腊语,含义是 舵手 或 领航员

简介说明

Production-Grade Container Orchestration Automated container deployment, scaling, and management

生产级的容器编排 自动化的容器部署、扩展和管理

Kubernetes,也称为K8S,其中8是代表中间“ubernete”的8个字符,是Google在2014年开源的一个容器编排引擎,用于自动化容器化应用程序的部署、规划、扩展和管理,它将组成应用程序的容器分组为逻辑单元,以便于管理和发现,用于管理云平台中多个主机上的容器化的应用,Kubernetes 的目标是让部署容器化的应用简单并且高效,很多细节都不需要运维人员去进行复杂的手工配置和处理;

Kubernetes拥有Google在生产环境上15年运行的经验,并结合了社区中最佳实践;

K8S是 CNCF 毕业的项目,本来Kubernetes是Google的内部项目,后来开源出来,又后来为了其茁壮成长,捐给了CNCF;

CNCF全称Cloud Native Computing Foundation(云原生计算基金会)

官网:https://kubernetes.io/

代码:https://github.com/kubernetes/kubernetes

Kubernetes是采用Go语言开发的,Go语言是谷歌2009发布的一款开源编程语言;

编排是什么意思?

按照一定的目的依次排列;

调配、安排;

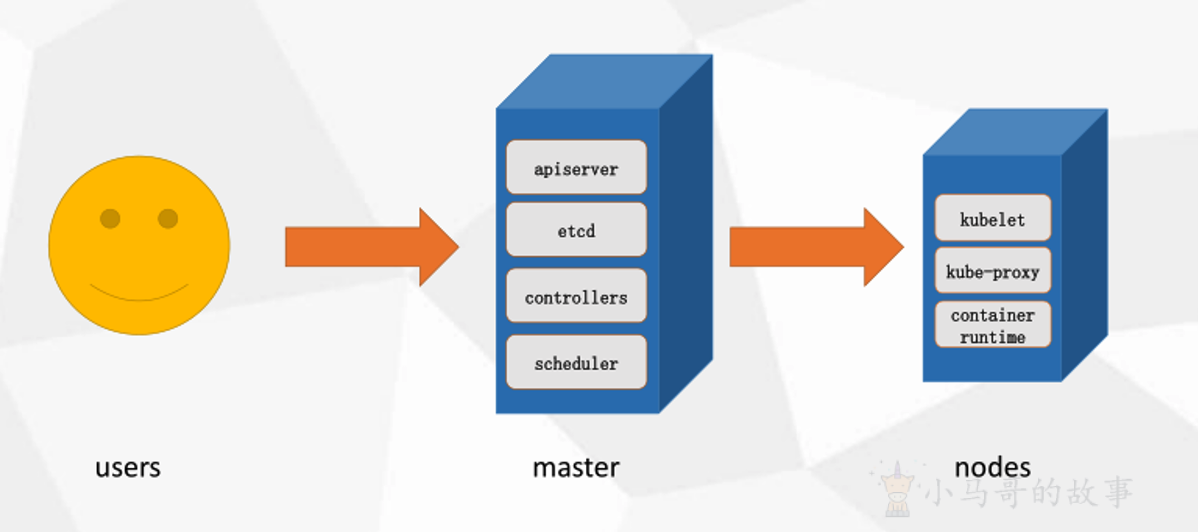

整体架构

Master

k8s集群控制节点,对集群进行调度管理,接受集群外用户去集群操作请求;

Master Node 由 API Server、Scheduler、ClusterState Store(ETCD 数据库)和 Controller MangerServer 所组成;

Nodes

集群工作节点,运行用户业务应用容器;

Nodes节点也叫Worker Node,包含kubelet、kube proxy 和 Pod(Container Runtime);

搭建方式

部署 Kubernetes 环境(集群)主要有多种方式:

- minikube

minikube可以在本地运行Kubernetes的工具,minikube可以在个人计算机(包括Windows,macOS和Linux PC)上运行一个单节点Kubernetes集群,以便您可以试用Kubernetes或进行日常开发工作;

https://kubernetes.io/docs/tutorials/hello-minikube/

- kind

Kind和minikube类似的工具,让你在本地计算机上运行Kubernetes,此工具需要安装并配置Docker;

https://kind.sigs.k8s.io/

- kubeadm

Kubeadm是一个K8s部署工具,提供kubeadm init 和 kubeadm join两个操作命令,可以快速部署一个Kubernetes集群;

官方地址:

https://kubernetes.io/docs/reference/setup-tools/kubeadm/kubeadm/

https://kubernetes.io/docs/setup/production-environment/tools/kubeadm/install-kubeadm/

- 二进制包

从Github下载发行版的二进制包,手动部署安装每个组件,组成Kubernetes集群,步骤比较繁琐,但是能让你对各个组件有更清晰的认识;

- yum安装

通过yum安装Kubernetes的每个组件,组成Kubernetes集群,不过yum源里面的k8s版本已经比较老了,所以这种方式用得也比较少了;

- 第三方工具

有一些大神封装了一些工具,利用这些工具进行k8s环境的安装;

- 花钱购买

直接购买类似阿里云这样的公有云平台k8s,一键搞定;

Kubeadm部署

kubeadm是官方社区推出的一个用于快速部署 kubernetes 集群的工具,这个工具能通过两条指令完成一个kubernetes集群的部署;

-

创建一个Master节点:

kubeadm init -

将Node节点加入到Master集群中:

$ kubeadm join <Master节点的IP和端口>

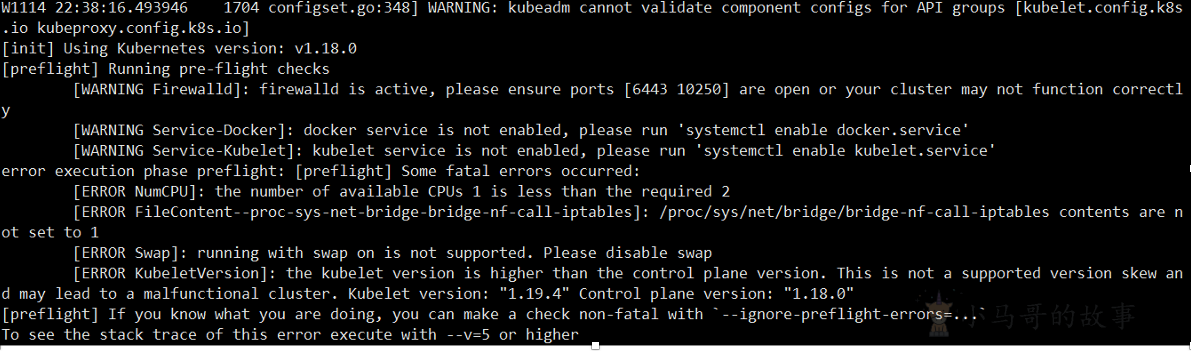

环境要求

-

一台或多台机器,操作系统CentOS 7.x-86_x64

-

硬件配置:内存2GB或2G+,CPU 2核或CPU 2核+;

-

集群内各个机器之间能相互通信;

-

集群内各个机器可以访问外网,需要拉取镜像;(非必须)

-

禁止swap分区;

如果环境不满足要求,会报错,比如:

环境准备

-

关闭防火墙

systemctl stop firewalld systemctl disable firewalld -

关闭selinux

sed -i 's/enforcing/disabled/' /etc/selinux/config #永久 setenforce 0 #临时 -

关闭swap(k8s禁止虚拟内存以提高性能)

sed -ri 's/.*swap.*/#&/' /etc/fstab #永久 swapoff -a #临时 -

在master添加hosts

cat >> /etc/hosts << EOF 172.16.45.131 k8smaster 172.16.45.132 k8snode1 172.16.45.133 k8snode2 EOF -

设置网桥参数

systemctl stop firewalld systemctl disable firewalld -

时间同步

systemctl stop firewalld systemctl disable firewalld

安装步骤

所有服务器节点安装 Docker/kubeadm/kubelet/kubectl

Kubernetes 默认容器运行环境是Docker,因此首先需要安装Docker;

安装 Docker

#更新docker的yum源

yum install wget -y

wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo

#安装指定版本的docker:

yum install docker-ce-19.03.13 -y

#yum install docker -y (这个安装的Docker版本偏旧) 1.13.x

#配置加速器加速下载 登录该网址获取加速地址(https://cr.console.aliyun.com/)

sudo mkdir -p /etc/docker

sudo tee /etc/docker/daemon.json <<-'EOF'

{

"registry-mirrors": ["https://bz93554o.mirror.aliyuncs.com"]

}

EOF

sudo systemctl daemon-reload

sudo systemctl restart docker

#然后执行以下命令不然会提示警告;

systemctl enable docker.service

#那么接下来需要搭建:kubeadm、kubelet、kubectl

配置k8s的阿里云YUM源

cat > /etc/yum.repos.d/kubernetes.repo << EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

到时候下载k8s的相关组件才能找到下载源;

安装 kubeadm,kubelet 和 kubectl

yum install kubelet-1.19.4 kubeadm-1.19.4 kubectl-1.19.4 -y

#然后执行以下命令不然会提示警告;

systemctl enable kubelet.service

#查看有没有安装:

yum list installed | grep kubelet

yum list installed | grep kubeadm

yum list installed | grep kubectl

#查看安装的版本:

kubelet --version

Kubelet:运行在cluster所有节点上,负责启动POD和容器;

Kubeadm:用于初始化cluster的一个工具;

Kubectl:kubectl是kubenetes命令行工具,通过kubectl可以部署和管理应用,查看各种资源,创建,删除和更新组件;

切记:此时应该重启一下centos;

切记:此时应该重启一下centos;

切记:此时应该重启一下centos;

重要的事情说三遍!!!

部署Master主节点

在master机器上执行以下命令;

kubeadm init --apiserver-advertise-address=172.16.45.131 --image-repository registry.aliyuncs.com/google_containers --kubernetes-version v1.19.4 --service-cidr=10.96.0.0/12 --pod-network-cidr=10.244.0.0/16

说明:

service-cidr 的选取不能和PodCIDR及本机网络有重叠或者冲突,一般可以选择一个本机网络和PodCIDR都没有用到的私网地址段,比如PODCIDR使用10.244.0.0/16, 那么service cidr可以选择10.96.0.0/12,网络无重叠冲突即可;

执行成功后会显示以下信息

[root@k8smaster ~]# kubeadm init --apiserver-advertise-address=172.16.45.131 --image-repository registry.aliyuncs.com/google_containers --kubernetes-version v1.19.4 --service-cidr=10.96.0.0/12 --pod-network-cidr=10.244.0.0/16

W0602 21:10:27.153412 8853 configset.go:348] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]

[init] Using Kubernetes version: v1.19.4

[preflight] Running pre-flight checks

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [k8smaster kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 172.16.45.131]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [k8smaster localhost] and IPs [172.16.45.131 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [k8smaster localhost] and IPs [172.16.45.131 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 14.502742 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.19" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node k8smaster as control-plane by adding the label "node-role.kubernetes.io/master=''"

[mark-control-plane] Marking the node k8smaster as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: uk5qox.2z2gpcq1qtjl7hlr

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 172.16.45.131:6443 --token uk5qox.2z2gpcq1qtjl7hlr \

--discovery-token-ca-cert-hash sha256:ce6240b7d71a93309c46e99d450028d2d36fcb508960c557e6ce56bfaf0b1c58

接下来在master机器上继续执行:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

查看节点

kubectl get nodes

部署Node节点

kubeadm join 172.16.45.131:6443 --token uk5qox.2z2gpcq1qtjl7hlr \

--discovery-token-ca-cert-hash sha256:ce6240b7d71a93309c46e99d450028d2d36fcb508960c557e6ce56bfaf0b1c58

成功后会显示以下信息

[root@k8snode1 ~]# kubeadm join 172.16.45.131:6443 --token uk5qox.2z2gpcq1qtjl7hlr \

> --discovery-token-ca-cert-hash sha256:ce6240b7d71a93309c46e99d450028d2d36fcb508960c557e6ce56bfaf0b1c58

[preflight] Running pre-flight checks

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -oyaml'

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

此时在master上查看节点显示以下信息说明加入成功

[root@k8smaster ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8smaster NotReady master 172m v1.19.4

k8snode1 NotReady <none> 120m v1.19.4

k8snode2 NotReady <none> 120m v1.19.4

部署网络插件

下载kube-flannel.yml文件

wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

应用kube-flannel.yml文件得到运行时容器

#在master机器上执行

kubectl apply -f kube-flannel.yml

[root@k8smaster ~]# kubectl apply -f kube-flannel.yml

podsecuritypolicy.policy/psp.flannel.unprivileged created

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.apps/kube-flannel-ds created

#(稍等几分钟后) 查看节点信息 会看见状态发生变化

[root@k8smaster ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8smaster Ready master 172m v1.19.4

k8snode1 Ready <none> 120m v1.19.4

k8snode2 Ready <none> 120m v1.19.4

至此我们的k8s环境就搭建好了;

查看运行时容器pod (一个pod里面运行了多个docker容器)

kubectl get pods -n kube-system

部署容器化应用

#部署nginx

kubectl create deployment nginx --image=nginx

kubectl expose deployment nginx --port=80 --type=NodePort

kubectl get pod,svc

#访问地址:http://NodeIP:Port

#部署Tomcat:

kubectl create deployment tomcat --image=tomcat

kubectl expose deployment tomcat --port=8080 --type=NodePort

#访问地址:http://NodeIP:Port

K8s部署微服务

1、项目打包(jar、war)-->可以采用一些工具git、maven、jenkins

2、制作Dockerfile文件,生成镜像;

3、kubectl create deployment nginx --image= 你的镜像

4、你的springboot就部署好了,是以docker容器的方式运行在pod里面的;

本文由 小马哥 创作,采用 知识共享署名4.0 国际许可协议进行许可

本站文章除注明转载/出处外,均为本站原创或翻译,转载前请务必署名

最后编辑时间为:

2021/09/03 02:59

"show tables;"

不错,多谢大佬,跟着做确实好使 避免不少坑